Embedded ethics: What if ethicists joined the teams developing your AI tools?

A Munich-based team proposes integrating ethicists directly into medical AI development teams. The idea is appealing—but is it enough? Analysis of an approach directly relevant to future AI-assisted psychotherapy tools.

Source analyzed

https://pubmed.ncbi.nlm.nih.gov/35081955/

The starting problem

Since 2018, more than 84 ethical charters for AI have been published by public and private organizations worldwide. All converge toward familiar principles derived from medical bioethics (Beauchamp & Childress, 1979). But what do these principles actually mean in practice?

Beneficence

Act positively for the patient’s good. For AI in mental health: the tool must produce a demonstrated clinical benefit, not just “do no harm.” A therapeutic chatbot that occupies the patient without improving their condition doesn’t meet this criterion, even if it causes no damage.

Non-maleficence

Do no harm—primum non nocere. In AI: avoid discriminatory biases, diagnostic errors, false negatives on suicide risk. More subtly: don’t create tool dependency, don’t delay access to a real therapist. Harm can be indirect and invisible.

Transparency

Make decision-making mechanisms visible and understandable. For AI: does the patient know they’re interacting with a machine? How does the algorithm determine its responses? What data was it trained on? Transparency is the foundation of informed consent—without it, the patient cannot choose.

Justice

Ensure equitable access and absence of discrimination. For AI: does the tool work equally well for populations underrepresented in training data? A suicide risk scoring algorithm trained primarily on white English-speaking populations structurally discriminates against minorities.
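One way to make this justice question checkable is to compare error rates across groups instead of reporting a single overall figure. The sketch below is a minimal illustration, not a validated audit method: the function name, the group labels "A" and "B", the 0.5 threshold, and all the data are hypothetical and synthetic. It computes, for each group, the share of patients who had an event but whose risk score stayed below the alert threshold (the false negative rate).

```python
from collections import defaultdict


def false_negative_rate_by_group(records, threshold):
    """Return the false negative rate per group.

    records: iterable of (group, risk_score, had_event) tuples.
    A patient is "missed" when had_event is True but risk_score < threshold.
    Groups with no positive cases are left out of the result.
    """
    missed = defaultdict(int)
    positives = defaultdict(int)
    for group, score, had_event in records:
        if had_event:
            positives[group] += 1
            if score < threshold:
                missed[group] += 1
    return {group: missed[group] / positives[group] for group in positives}


# Synthetic, illustrative data only (hypothetical groups "A" and "B").
records = [
    ("A", 0.9, True), ("A", 0.8, True), ("A", 0.7, True), ("A", 0.2, False),
    ("B", 0.6, True), ("B", 0.4, True), ("B", 0.3, True), ("B", 0.1, False),
]

rates = false_negative_rate_by_group(records, threshold=0.5)
for group, rate in sorted(rates.items()):
    print(f"group {group}: false negative rate = {rate:.2f}")
```

In this toy data set the tool misses none of the at-risk patients in group A and two out of three in group B, a gap that an aggregate accuracy figure would hide.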

Responsibility

Being able to identify who answers for the consequences. For AI: when a chatbot gives inappropriate advice to a patient in crisis, who is responsible? The developer? The company? The clinician who recommended the app? The absence of a clear chain of responsibility is one of the most concerning blind spots.

The problem? These principles remain a dead letter in development teams.

AI developers aren’t trained in ethics. Ethicists rarely work in tech companies. And when guidelines exist, no one knows concretely how to apply them in code, in interfaces, or in architecture choices. Brent Mittelstadt, a researcher at Oxford, summarized the problem in 2019: AI development has neither shared objectives, nor professional standards, nor methods for translating principles into practice, nor accountability mechanisms.

Result: patients sometimes become “involuntary guinea pigs,” as shown by the case of an algorithm used on millions of American patients that proved to carry significant racial bias (Obermeyer et al., 2019).

It’s in this context that an interdisciplinary team from the Technical University of Munich (TUM) proposes a concrete solution: embedded ethics.

Embedded ethics in 3 minutes

The concept

Embedded ethics consists of placing one or more ethicists directly in the development team, from the project’s beginning until its deployment. The ethicist doesn’t intervene occasionally, in an ethics committee, after the fact. They participate in team meetings, co-write protocols, analyze design choices as they occur.

It’s not an audit. It’s continuous collaboration.

What it is not

| Approach | When? | Who? | Limitation |
|---|---|---|---|
| Ethical charters | Before the project | Management / policy | Too abstract for developers |
| Ethics committee | After design | External experts | Too late to change the product |
| Ethics training | During studies | Teachers | Insufficient for required expertise |
| Embedded ethics | Throughout the project | Ethicist in the team | Depends on power dynamics |

The 9 concrete recommendations

The article proposes a guidance table that every mental health tool developer should know:

Objectives

- Anticipate, identify, and address ethical issues at each development phase

- Work in a collaborative and iterative manner with the technical team

Integration

- Organize regular exchanges, formal or informal, between ethicists and developers

Practice

- Make explicit the ethical theoretical frameworks used in analyses

- Justify ethical positions in relation to the project’s specific objectives

- Clearly establish the decision-making structure from the start

- Make ethical analyses transparent, within confidentiality limits

Expertise and training

- The embedded ethicist must have expertise in ethical analysis AND a basic understanding of the technology

- Create opportunities for cross-training before and during the project

A concrete case that already exists

The approach is not purely theoretical. Since 2006, bioethicist Jeantine Lunshof has been integrated full-time into George Church’s laboratory at Harvard’s Wyss Institute (genomics, synthetic biology). She participates in lab meetings, co-authors publications, contributes to research protocol writing.

This model has been adapted for teaching: Harvard’s Embedded EthiCS program integrates philosophers into computer science courses—not as occasional speakers, but as co-teachers throughout the semester.

The Anthropic case. The company developing Claude (the AI we use for this site) has employed Amanda Askell, a philosopher with an NYU PhD in ethics, since 2021 to lead the “personality alignment” team. Her role: define the character, values, and moral boundaries of the model, not after the fact but from conception. This is a form of embedded ethics applied to conversational AI development. Askell was named to the TIME100 AI (2024) and profiled by the Wall Street Journal Magazine (February 2026) for this work. Proof that the model is taken seriously, at least by some actors.

The key question: can these models, developed in genomics, teaching, and by certain AI developers, be generalized to medical AI development—an environment subject to intense commercial pressure where patient stakes are direct?

What this changes for psychotherapy

Why this concerns us directly

AI tools in mental health are multiplying: therapeutic chatbots, session transcription, sentiment analysis, suicide risk scoring, inter-session tracking applications. Each of these tools is developed by technical teams who, most often, have no clinical psychologist on their development team, much less an ethicist.

Decisions made at the design stage determine the final product:

| Technical decision | Invisible ethical issue | Clinical consequence |
|---|---|---|
| Choosing training data | Which populations are represented? | Bias in detecting suffering in certain cultures |
| Defining suicide alert thresholds | Sensitivity vs. specificity | Anxiety-inducing false positives or dangerous false negatives |
| Designing chatbot interface | Level of artificial “warmth” | Risk of problematic attachment |
| Storing transcripts | Duration, location, access | Confidentiality of therapeutic content |

Each of these decisions is made before the first patient uses the tool. This is exactly when an embedded ethicist could intervene.
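The alert-threshold row in the table above can be made concrete with a small calculation. The sketch below is only an illustration under stated assumptions: the scores, labels, and candidate thresholds are synthetic and hypothetical, and the function name is ours, not taken from any real tool. It shows how moving a single threshold trades sensitivity (at-risk patients correctly flagged) against specificity (patients not at risk correctly left alone).

```python
def sensitivity_specificity(scores, labels, threshold):
    """Compute (sensitivity, specificity) for an alert raised when score >= threshold.

    scores: risk scores between 0 and 1.
    labels: True if the patient was actually at risk, False otherwise.
    """
    tp = fn = tn = fp = 0
    for score, at_risk in zip(scores, labels):
        flagged = score >= threshold
        if at_risk and flagged:
            tp += 1
        elif at_risk:
            fn += 1  # dangerous miss: at-risk patient not flagged
        elif flagged:
            fp += 1  # false alarm: patient flagged without being at risk
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity


# Synthetic, illustrative data only: 4 at-risk patients, 6 not at risk.
scores = [0.95, 0.80, 0.55, 0.35, 0.60, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [True, True, True, True, False, False, False, False, False, False]

results = {t: sensitivity_specificity(scores, labels, t) for t in (0.3, 0.5, 0.7)}
for threshold, (sens, spec) in results.items():
    print(f"threshold {threshold}: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

On this toy data, the lowest threshold flags every at-risk patient but raises a false alarm for half of the others, while the highest threshold raises no false alarms and misses half of the at-risk patients. Choosing where to sit on that curve is a value judgment about which error is more acceptable, not a purely technical setting.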

The ideal model for an AI-Psy tool

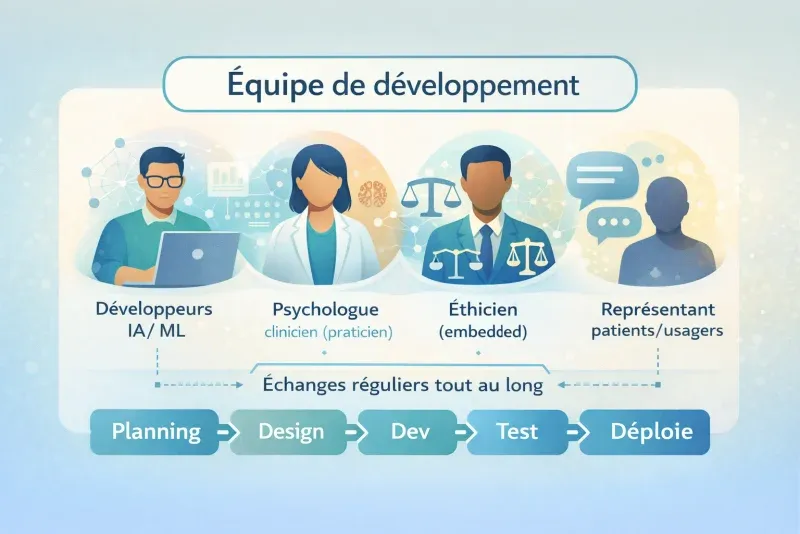

If we applied embedded ethics to developing an AI tool for psychotherapy, the team would look like this:

In this model, the ethicist isn’t there to block or validate. They’re there to make visible the issues no one else sees—because developers don’t have the training to spot them, and clinicians don’t have access to the development process.

What is solid in this proposal

The diagnosis is correct

Abstract ethical principles don’t spontaneously translate into development practices. This finding is widely shared in academic literature.

An operationalizable framework

The 9 recommendations are concrete, applicable, and adaptable to different development contexts.

Intellectual honesty

The authors themselves discuss the risk of ethics washing, power dynamics, and the necessity of binding measures. This is rare in this type of literature.

The team exemplifies what it advocates

Six co-authors from different disciplines (medical ethics, anthropology, philosophy, sociology of science, robotics). It’s not an ethicist speaking alone: it’s an interdisciplinary team that practiced what it recommends.

The limitations—and why they matter

No evidence that it works

This is the most troubling point. The article is a proposal, not an evaluation. No empirical data shows that embedded ethics produces better tools, less harm, or better ethical decisions. The only case cited (Lunshof/Harvard) concerns genomics, not medical AI.

Clinical analogy: it’s like a colleague proposing a new therapeutic approach saying “here’s the protocol, but we have no efficacy data.” We’d listen—but we wouldn’t adopt the approach without evaluation.

The power dynamics problem

The authors themselves mention the case of Timnit Gebru, an AI ethics researcher forced out of Google in 2020 after a dispute over a critical research paper. But they don’t draw the consequences for their own proposal.

The uncomfortable question: What happens when the embedded ethicist identifies a problem that would cost millions to resolve—or that would call the product itself into question? Do they have veto power? Can they publish their findings? Can they resign without a non-disclosure agreement?

Without structural protection mechanisms, the embedded ethicist risks cognitive capture: through working with the team, they end up adopting its culture and losing their critical perspective. Every clinician knows this phenomenon—it’s exactly why supervision is mandatory in psychotherapy.

Imported rather than emergent ethics

The model rests on the idea that ethical competence is specialized knowledge held by experts and that it must be imported into technical teams.

We could conceive things differently. If we thought in terms of ecosystem—developers, clinicians, patients, regulators in constant interaction—ethical competence could emerge from these interactions rather than being imported by a specialist. It’s the difference between adding an ingredient to a recipe and creating the conditions for a flavor to develop on its own.

The blind spot of care ethics

This is perhaps the most surprising absence. In an article about the ethics of medical AI, care ethics is never mentioned. What is it? Developed by Carol Gilligan (1982) and then formalized by political philosopher Joan Tronto (1993), care ethics is a moral tradition that places the care relationship, not abstract principles, at the center of ethical reflection. Where principlism (Beauchamp & Childress) asks “what principles apply here?”, care ethics asks “what does this vulnerable person need, and how can we respond attentively and competently?”

Tronto identifies four phases of care: attention (caring about—recognizing that a need exists), responsibility (taking care of—assuming responsibility to respond), actual care (care-giving—concrete competence in the act), and care reception (care-receiving—verifying that the need has been satisfied, from the beneficiary’s perspective). This framework is directly relevant for designing AI tools in mental health.

Concrete example:

Imagine an inter-session follow-up chatbot for depressed patients. With a principlist approach, ethical evaluation will verify: is consent informed? Is data protected? Is the tool equitable? With a care approach, we would additionally ask: does the chatbot detect when the user is in increased distress and adapt its response? (attention). Who takes responsibility for verifying that the chatbot doesn’t worsen the patient’s isolation? (responsibility). Is the chatbot truly competent for this type of suffering, or does it give generic responses? (actual care). And especially: have patients themselves been asked if the tool does them good—or if it simply gives them the impression of not being alone? (care reception).

For psychotherapy—where the relationship is the care—this perspective seems unavoidable. Principlism thinks of the moral agent as an autonomous individual facing universal principles. Care ethics thinks of them as a being in relationship, whose responsibility emerges from the bond with others. Not integrating this dimension into mental health tool design is like building a bridge by only calculating material resistance, without asking who will cross it and in what state.

Three alternative approaches to know

Embedded ethics is not the only answer to the problem. Here are three complementary approaches that any professional interested in AI in psychotherapy should know:

Value-Sensitive Design (Batya Friedman)

Integrate human values into design through conceptual, empirical, and technical investigations. Very structured methodology, developed over 30 years.

Technology virtue ethics (Shannon Vallor)

Develop good moral dispositions (prudence, justice, courage) in developers themselves, rather than relying solely on processes.

Binding regulation (EU AI Act)

Impose legal obligations of transparency, evaluation, and accountability. Only approach with force of law.

None of these approaches is sufficient alone. It’s probably a combination—embedded ethics + regulation + virtue training + participatory design including patients—that will produce the best results.

Open questions for research

McLennan et al.’s article opens more questions than it resolves. Here are those we find most urgent for the psychotherapy field:

- Does embedded ethics produce measurably safer or more ethical AI tools? Which indicators should be used?

- How can the “ethical quality” of a mental health tool be evaluated, beyond regulatory compliance?

- What institutional mechanisms protect the embedded ethicist against commercial pressure? Is the clinical supervision model transposable?

- Does the embedded ethicist develop “cognitive capture” comparable to institutional countertransference?

- Does care ethics offer a framework better suited than principlism to AI psychotherapy tool design?

- How can patient voices, not just those of expert ethicists, be integrated into the development process?

- Do clinical psychologists have a specific role to play in embedded ethics, distinct from that of professional ethicists?

Our position

Embedded ethics is a real advance in thinking about integrating ethics into technological development. The diagnosis is correct: principles alone are not enough, and the ethics committee that intervenes after design is too late. The proposal to place ethicists in teams, from the beginning, is concrete and applicable.

But the approach remains insufficient if it’s the only line of defense. Power dynamics, commercial pressure, and the risk of cognitive capture are not resolved by interdisciplinary good will. Structural mechanisms—regulation, independent audits, user inclusion—are indispensable.

For AI-assisted psychotherapy, we retain three lessons:

Demand transparency on the development process

When evaluating an AI tool for your practice, ask if clinicians and/or ethicists participated in its development—not just its marketing.

Ethical competence is not reserved for ethicists

As a clinical psychologist, your training in relationship, informed consent, vulnerability management, and self-observation (supervision) gives you ethical expertise directly mobilizable to evaluate these tools.

Don’t delegate your judgment

Not to abstract ethical principles, nor to distant committees, nor to embedded ethicists you’ll never see. Ethical responsibility remains yours—it’s both a burden and a competence.

Analyzed reference: McLennan, S., Fiske, A., Tigard, D., Müller, R., Haddadin, S., & Buyx, A. (2022). Embedded ethics: a proposal for integrating ethics into the development of medical AI. BMC Medical Ethics, 23(1), 6. https://doi.org/10.1186/s12910-022-00746-3

Supplementary readings:

- Mittelstadt, B. (2019). Principles alone cannot guarantee ethical AI. Nature Machine Intelligence, 1, 501-507. https://doi.org/10.1038/s42256-019-0114-4

- McLennan, S. et al. (2024). Embedded Ethics in Practice: A Toolbox for Integrating the Analysis of Ethical and Social Issues into Healthcare AI Research. Science and Engineering Ethics. https://doi.org/10.1007/s11948-024-00523-y

- Vallor, S. (2016). Technology and the Virtues: A Philosophical Guide to a Future Worth Wanting. Oxford University Press.

- Obermeyer, Z. et al. (2019). Dissecting racial bias in an algorithm used to manage the health of populations. Science, 366(6464), 447-453. https://doi.org/10.1126/science.aax2342
