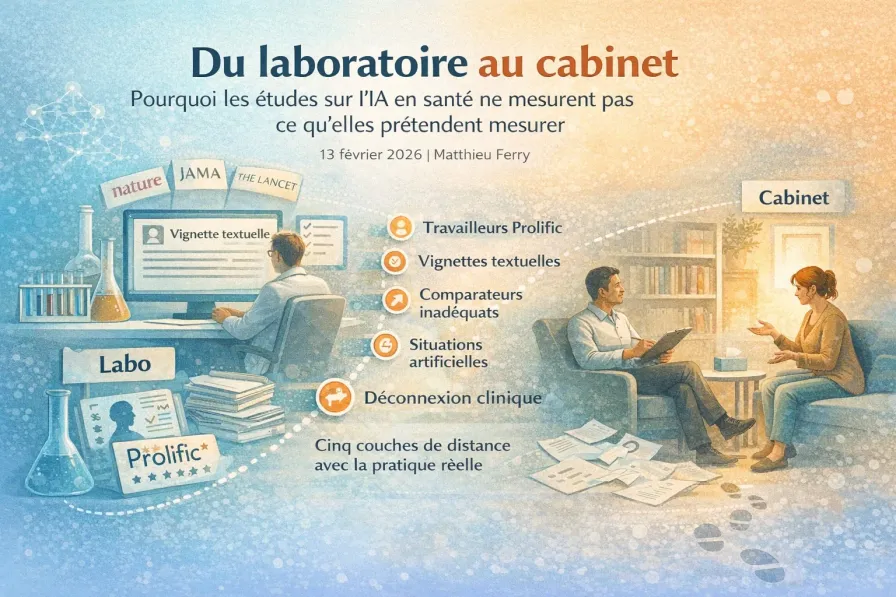

From Lab to Practice: Why AI Health Studies Don't Measure What They Claim

Major AI health studies — published in Nature, JAMA, The Lancet — rely on Prolific workers, text-based vignettes, and inadequate comparators. Five layers of distance separate these protocols from clinical reality. A detailed analysis with data and a practical reading framework for the clinician.

As a clinician interested in integrating artificial intelligence into my practice, I keep running into an uncomfortable paradox. I read the major studies from top-tier journals — Nature, JAMA, The Lancet — hoping to understand what AI can actually do for my patients. And every time, I hit the same wall.

The experimental conditions are so far removed from what actually happens in my office that I simply cannot tell whether these results apply to my work.

This unease has grown considerably in recent years, as a wave of headline-grabbing studies has swept across the field.

“ChatGPT outperforms physicians in responding to patients”

JAMA Internal Medicine, April 2023 [1]

“An AI chatbot effectively treats depression”

NEJM AI, June 2024 [2]

“AI outperforms doctors in clinical diagnosis”

Nature, October 2024 [3]

“ChatGPT’s flaws for medical self-diagnosis revealed by study”

Le Monde, February 2026

These headlines circulate through the media, fuel public debate, and shape the expectations of patients and policymakers alike.

The last example is particularly instructive. The French newspaper Le Monde announces that “individuals are better at guessing their illness with a simple search engine than with a conversational agent like OpenAI’s.” Three framing biases in a single sentence:

- “a simple search engine”: the control group in the study primarily used the NHS website and its symptom-checking tool — a structured triage system designed by clinicians and validated in real-world practice. Not Google.

- “guessing their illness”: the study measured a triage score (emergency room, GP, pharmacy, etc.), not a diagnosis.

- “ChatGPT’s flaws”: the study tested three distinct LLMs (GPT-4o, Llama 3, Command R+) with similar results across all three. Reducing the study to “ChatGPT” obscures a systemic finding about human-LLM interaction in general.

The academic title in Nature Medicine was already questionable; the additional media layer completes the distortion.

The trouble starts when you read these studies with the care they deserve. You then discover that the “patients” are actually gig workers recruited on Prolific, paid to simulate symptoms they don’t have. That the “depressive symptoms” are presented as standardized written vignettes, processed by the AI with no video or voice interaction. That the “therapies” being tested are compared not to standard care, but to receiving nothing at all. That the “physicians” competing with the AI were forced to use interfaces they’d never been trained on.

Let me be clear upfront: I’m not saying these studies are “bad” or “useless.” Many are methodologically rigorous and make genuine contributions. The problem is not that they exist. The problem is the gap — sometimes vast — between what they actually demonstrate and what their titles, abstracts, and press releases invite us to conclude.

That gap has a name in research methodology: the ecological validity deficit.

Internal validity vs. ecological validity: two different questions

Internal validity

“Does the study correctly measure what it claims to measure?” Methodological rigor, variable control, randomization quality. A study with high internal validity produces reliable results under the conditions in which it was conducted.

Ecological validity

“Can the results reasonably be transferred to the real-world contexts the study claims to inform?” Representativeness of participants, similarity of experimental tasks to naturalistic situations, fidelity of test conditions to field constraints.

A study can be impeccable in internal validity and deeply problematic in ecological validity. This is precisely what is happening across much of the current AI-in-health literature: rigorous protocols, solid statistical analyses, results published in prestigious journals — but experimental conditions so artificial that they tell us almost nothing about what would actually happen if we integrated these tools into real clinical practice.

This gap is not trivial. When a study in Nature Medicine announces that AI “outperforms physicians” in diagnosis, should I conclude it would outperform my diagnostic practice? When a randomized trial shows that a chatbot “significantly reduces depressive symptoms,” should I recommend it to my depressed patients?

The honest answer is: I don’t know. And the problem is that the studies themselves don’t give me the information I need to find out.

I. A case study: what Bean et al. (Nature Medicine, 2025) actually measure

An exemplary study… in its method

The study by Bean and colleagues, published in January 2025 in Nature Medicine, deserves to be taken seriously [4]. It is a pre-registered randomized controlled trial, exactly the kind of study that the AI-in-health field sorely lacks. The authors recruited 1,298 participants, divided into four arms: three groups using LLMs (GPT-4o, Llama 3, Command R+) and one control group without AI assistance.

The primary finding is clear: participants with LLM access did not achieve better scores than the control group. Worse, they identified fewer relevant medical conditions. Of the 30 transcripts analyzed qualitatively, 16 showed partial or inadequate communication between the user and the model.

This result warrants attention. But to understand what this study actually tells us, we need to examine precisely what it measures. And here, five layers of distance pile up between the experimental setup and real clinical practice.

Layer 1 — The “patients” who aren’t

The study recruits via Prolific, a platform of workers paid for online tasks. Compensation: £2.25. Estimated time: 15 minutes. No health-related eligibility criteria. Let’s call these participants what they are: workers completing a cognitive task for pay. Not patients.

| Dimension | Real patient | Prolific participant |
|---|---|---|
| Motivation | Pain, worry, need for care | Payment (£2.25) |
| Stakes | Personal health, potentially life-threatening | None (abstract task) |
| Emotional state | Anxiety, stress, vulnerability | Detachment, work routine |
| Clinical information | Lived bodily sensations, uncertainty | Written text, symptoms provided |
| Cognitive engagement | Maximal (involves own body) | Variable (competing with other tasks) |
| Consequences of error | Potentially serious | None |

When you’ve had stomach pain for three days, when you wake up at night, when you’re torn between “it’s nothing” and “it’s serious,” the way you search for information, ask questions, and weigh answers has nothing in common with reading a clinical scenario for £2.25.

Layer 2 — The “symptoms” that are just text

Participants receive a written scenario describing a clinical case. All relevant symptoms are listed, organized, formulated in appropriate medical terminology. That is not how it works in real life.

A patient querying a self-triage system doesn’t start from a structured text. They start from vague bodily sensations, unformulated worries, incomplete recollections. They say “my stomach hurts” without specifying the exact location because they don’t know it matters. What Bean et al. actually measure is an LLM’s ability to process pre-structured medical text — a reading comprehension task, not assisted self-diagnosis.

And yet, even with this text in front of them, 16 out of 30 transcripts showed partial or inadequate information transmission to the LLM. Imagine that failure rate when the starting point isn’t a clear text but a vague sensation and diffuse anxiety.

Layer 3 — The “tool” nobody would actually deploy

The study uses Dynabench — a data collection platform originally developed by Meta AI for language model evaluation — to host the chat interface between participants and LLMs. Participants interact with GPT-4o, Llama 3, or Command R+ through this generic chat interface, in free text, with no clinical guidance. No serious company working on medical AI would offer this type of interface. Systems designed for real deployment all include structuring elements: guided questionnaires, decision trees, pre-structured prompts, verification mechanisms.

It’s like testing whether cars are useful for transportation by having people who’ve never taken a driving lesson operate one in an empty lot with no GPS, then concluding that “cars aren’t useful for getting around.”

Layer 4 — The “control” that isn’t really one

Participants in the non-LLM group primarily used the NHS website, particularly its symptom checker — a structured triage system designed by clinicians and validated on real-world data. The study doesn’t compare “LLM vs. nothing.” It compares “free chat with a general-purpose LLM vs. a professionally optimized triage system.”

The study doesn’t show that “LLMs don’t help” in absolute terms. It shows that in the NHS context, free chat with a general-purpose LLM offers no advantage over existing structured tools. That’s interesting. That’s useful. But it’s not the same claim.

Layer 5 — The score that flattens nuance

Scoring is binary: either the recommendation matches the reference exactly, or it’s wrong. Recommending “A&E” instead of “ambulance” counts as an error — the same as recommending “stay home” for a heart attack.

A graded scoring system would have distinguished dangerous errors (unsafe under-triage) from minor ones (cautious over-triage). It might have revealed that LLMs get people “almost to the right place” — which would already be informative.
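To make the contrast concrete, here is a minimal sketch of binary versus graded triage scoring. The five-level `Triage` scale, its ordering, and the function names are assumptions made for illustration; they are not the scale or the code used by Bean et al.

```python
from enum import IntEnum
from typing import NamedTuple


class Triage(IntEnum):
    """Care levels ordered from least to most urgent (hypothetical scale)."""
    STAY_HOME = 0
    PHARMACY = 1
    GP = 2
    A_AND_E = 3
    AMBULANCE = 4


class GradedScore(NamedTuple):
    direction: str   # "exact", "over-triage" or "under-triage"
    levels_off: int  # distance from the reference, in care levels


def binary_score(recommended: Triage, reference: Triage) -> int:
    """Return 1 only for an exact match, 0 for every other answer."""
    return int(recommended == reference)


def graded_score(recommended: Triage, reference: Triage) -> GradedScore:
    """Keep both the direction and the size of the triage error."""
    gap = int(recommended) - int(reference)
    if gap == 0:
        direction = "exact"
    elif gap > 0:
        direction = "over-triage"   # more cautious than the reference
    else:
        direction = "under-triage"  # less urgent than the reference
    return GradedScore(direction, abs(gap))


# Heart attack scenario: the reference answer is an ambulance.
reference = Triage.AMBULANCE
for answer in (Triage.A_AND_E, Triage.STAY_HOME):
    print(answer.name, binary_score(answer, reference), graded_score(answer, reference))
```

Under the binary rule both answers score 0. The graded rule separates a one-level miss from a four-level miss, which is the distinction the paragraph above asks for.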

An honest reformulation

What the study actually answers

“Do Prolific workers paid £2.25, role-playing patients from written scenarios, achieve better binary triage scores using a general-purpose LLM via free chat, compared to the specialized NHS website?”

Answer: no.

But the question the title suggests — “Can LLMs be reliable medical assistants for the general public?” — remains wide open.

That said, the study does solidly document valuable findings: the human-AI communication problem is real; static benchmarks don’t predict interactive performance; LLM response inconsistency is documented; unstructured free chat is not sufficient. These are genuine contributions that should inform future development.

II. The problem is systemic

Bean et al. is not an outlier. The same pattern — tightly controlled setup, distance from real practice, conclusions that stretch far beyond the data — recurs across the most influential studies in the field.

“ChatGPT 9.8× more empathetic than physicians”

In April 2023, JAMA Internal Medicine publishes a study that makes headlines: ChatGPT is nearly 10 times more empathetic than doctors [1]. Over 2,000 citations in less than two years.

What does it actually measure? The researchers submitted 195 patient questions from Reddit (r/AskDocs) to ChatGPT and compared the responses to those from volunteer physicians. Independent healthcare professionals then rated the perceived quality and empathy of the texts without knowing which came from the AI.

Confounding factor #1: format

Physicians were responding on Reddit in their spare time, from their phones, between appointments. Result: average responses of 52 words. ChatGPT: 211 words, roughly four times as long. Longer responses tend to be perceived as higher quality regardless of content, so length alone can inflate the ratings.

Confounding factor #2: empathy isn’t rated by patients

Independent physicians rate perceived empathy in the texts. But empathy is not a textual property. It’s a relational experience, felt by the patient within the interaction. Therapeutic empathy doesn’t reside in the words used but in the quality of connection, in the ability to understand the other person in their context, in the precision of timing, in the attunement to the uniqueness of the individual. For a psychologist, the idea of measuring empathy by having external observers rate written texts is deeply problematic.

Confounding factor #3: ecological validity

The study freezes a dynamic interaction into a textual snapshot. It doesn’t measure what would happen if a patient actually used ChatGPT in a real medical situation, with follow-up questions, clarifications, and adjustments.

Ayers et al. don’t hide these limitations. They’re mentioned in the discussion section. But the title, the abstract, and the media coverage erase them. And that’s how a methodologically honest study becomes, in its public reception, a demonstration that AI “surpasses” physicians in empathy.

“An AI chatbot effectively treats depression”

In June 2024, NEJM AI publishes a randomized trial by Blease, Heinz, Jacobson et al.: a GPT-4 chatbot significantly reduces depressive and anxiety symptoms in 210 participants after 4 weeks [2]. It’s a rigorous clinical trial, with randomization and standardized measures (PHQ-9, GAD-7). On paper, exactly what we should demand.

So why include it here? Because the comparator is a waitlist. The control group receives nothing — no support, no intervention — for 4 weeks. This is a classic that every psychologist knows: virtually any form of attention outperforms the total absence of intervention. What’s being measured isn’t “chatbot efficacy” but “the effect of receiving something rather than nothing.”

Additional concerns compound the issue: the co-author and principal investigator is also the co-founder of the startup developing the chatbot; the study measures “therapeutic alliance” with a chatbot using the WAI-SR, a questionnaire designed for human intersubjective relationships, without questioning this conceptual shift; and there is no comparison with validated depression treatments (CBT, interpersonal therapy, behavioral activation).

The numbers that reveal the scale of the problem

These two studies aren’t anomalies. They fit a pattern documented by recent meta-analyses.

- 52.1%: median overall diagnostic accuracy of AI systems across 83 studies, barely better than a coin flip [5]

- −19.3 pts: accuracy drop when moving from static vignettes (82%) to multi-turn dialogues (62.7%) [6]

- 99.3%: share of chatbot health studies with insufficient reporting on critical ethical and clinical dimensions [7]

- 95%: share of 761 studies evaluating LLMs in healthcare that don’t use real patients (vignettes, synthetic datasets, simulations) [5]

- 16%: share of mental health chatbot studies that reach the clinical efficacy testing stage [8]

- g = 0.29: effect size of chatbots for depression, vs. g = 0.85 for face-to-face psychotherapy, a 1:3 ratio [9]
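For readers less familiar with effect sizes, the sketch below shows how a Hedges' g is usually computed and re-derives the two derived figures quoted above. The function names are hypothetical, and any group means, standard deviations, or sample sizes passed to `hedges_g` would be invented inputs, not data from the cited meta-analyses.

```python
import math


def hedges_g(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Standardized mean difference (group A minus group B), small-sample corrected."""
    df = n_a + n_b - 2
    # Pooled standard deviation across the two groups
    pooled_sd = math.sqrt(((n_a - 1) * sd_a**2 + (n_b - 1) * sd_b**2) / df)
    cohens_d = (mean_a - mean_b) / pooled_sd
    # Common approximation of the small-sample correction factor
    correction = 1 - 3 / (4 * df - 1)
    return cohens_d * correction


def accuracy_drop(static_pct, dialogue_pct):
    """Difference in percentage points, rounded to one decimal."""
    return round(static_pct - dialogue_pct, 1)


def effect_ratio(g_chatbot, g_therapy):
    """Chatbot effect size as a fraction of the psychotherapy effect size."""
    return round(g_chatbot / g_therapy, 2)


# Figures quoted in the text: 82% vs 62.7%, and g = 0.29 vs g = 0.85
print(accuracy_drop(82, 62.7))
print(effect_ratio(0.29, 0.85))
```

The ratio comes out at about one third, which is where the 1:3 shorthand comes from. Whether the two g values come from comparable populations and comparators is a separate question that this arithmetic cannot answer.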

These numbers describe a scientific landscape where the majority of studies don’t test what would happen in actual practice, don’t compare their tools to existing alternatives, and don’t provide the information needed to assess generalizability.

This isn’t a problem of “a few bad studies.” It’s a structural problem.

The vicious cycle

How did we get here? The “publish or perish” pressure is no longer just pressure to publish — it’s pressure to publish with impact. “Three general-purpose LLMs used through unstructured chat by Prolific workers did not outperform NHS resources on a binary triage score” doesn’t stand a chance against “AI Outperforms Doctors in Clinical Diagnosis.” The first formulation is honest. The second is publishable.

The halo effect of prestigious journals amplifies the problem. When a study appears in Nature, JAMA, or The Lancet, we assume it passed a rigorous quality filter. And that’s often true for internal validity. But ecological validity is not held to equally strict standards. Reviewers check methodology, statistics, and rigor of controls. They rarely check whether the experimental conditions are representative of clinical practice.

Press offices then amplify the message. Press releases strip away nuance. Journalists pick it up. Policymakers read the headlines. Patients hear about the “AI revolution in healthcare” without access to the methodological details. And the cycle closes: articles that generate buzz earn citations, which incentivizes other researchers to adopt similar protocols.

This cycle produces an epistemic double standard: physicians don’t diagnose by reading text vignettes on a chat interface; psychotherapists aren’t evaluated on their “empathy” by observers reading their session notes; psychological treatments aren’t validated by comparing them to “nothing.” But when we test AI, these artificial conditions become the norm. And when AI performs well under those conditions, we conclude it “surpasses” humans — without ever having compared like with like.

III. Ecological validity is not a luxury

Faced with these observations, a legitimate objection: “Producing ecologically valid data is extraordinarily difficult. Ethical constraints, practical constraints, methodological constraints — shouldn’t we accept a reasonable compromise?”

This objection deserves to be taken seriously. But the difficulty of producing ecologically valid data is not an excuse for settling for profoundly non-ecological data while presenting results as though they directly illuminate clinical practice. It’s a call to invest in better experimental designs — not to lower our standards.

Methodological frameworks already exist

And contrary to what the scale of the problem might suggest, tools already exist to structure this demand.

Three-tier framework by Hua et al.

Published in World Psychiatry (2025), proposes an explicit progression: controlled benchmarks (T1), feasibility studies under semi-naturalistic conditions (T2), clinical efficacy trials in real-world settings (T3). Finding: 84% of studies stop at the T1-T2 level.

Context-centered framework by Choudhury

Published in JMIR Human Factors (2022), emphasizes the need to evaluate mental health AI systems in their actual usage contexts, with real users in vulnerable situations. Only 9% of analyzed studies achieve an acceptable level of ecological validity.

CHART (Chatbot Assessment Reporting Tool)

Published in JAMA Network Open (2025), proposes 12 items for evaluating and reporting chatbot health studies, including ecological dimensions. Finding: 99.3% of studies show insufficient reporting.

CONSORT-AI

Published in Nature Medicine and BMJ (2020), adds 14 specific items for reporting AI clinical trials. But only 19% of studies cite it, and only 3 out of 52 journals require it.

CONSORT 2025 / SPIRIT 2025

Major update published in April 2025 (simultaneously in JAMA, The Lancet, BMJ, Nature Medicine). Incorporates Open Science, but includes no AI-specific recommendations — despite CONSORT-AI existing since 2020.

PROBAST+AI

Published in the BMJ (2025), proposes 34 questions for assessing the quality, risk of bias, and applicability of clinical prediction models — whether based on traditional statistical formulas or artificial intelligence techniques. Finding: the majority of published models are of poor quality with overestimated performance.

These tools are available. But they are rarely used. The problem is not the absence of frameworks. The problem is that the field has not yet found the institutional, financial, and cultural means to apply them systematically.

IV. Six questions for the practitioner-reader

While we wait for standards to evolve, what can we do? We can learn to read these studies with the right lens. Not to dismiss them wholesale, but to distinguish what they actually demonstrate from what their titles suggest.

Here are six questions any clinician can ask when faced with an AI health study. These aren’t traps. They’re clinical reading reflexes. We’ve been evaluating therapeutic interventions with this level of rigor for decades. AI should be no exception.

Question 1 — Are the “patients” actual patients?

What to look for: What is the participants’ actual status? Actively consulting patients? People with confirmed symptoms? Or platform workers recruited to simulate cases?

Why it matters: A Prolific worker paid £2.25 does not have the same cognitive, emotional, and motivational stakes as a genuinely ill patient. Research shows that task engagement, emotional load, and sense of urgency profoundly influence decision-making. Simulating is not living.

Question 2 — Are the symptoms lived or simulated?

What to look for: Does the clinical information come from the participant themselves, or from a text they’ve been given to read?

Why it matters: When we seek medical help, we don’t read our symptoms — we feel them, and we try to put them into words. That translation is imperfect, emotionally charged. Providing a standardized text bypasses this entire dimension — it measures text comprehension, not assisted self-diagnosis.

Question 3 — Is the tool tested the actual product or a lab prototype?

What to look for: Is the AI being evaluated the system as it would be deployed? Or a general-purpose version (ChatGPT, Claude) in a bare interface, without the regulatory constraints and guardrails of a certified medical device?

Why it matters: Testing GPT-4 through open-ended chat measures the technical capabilities of a language model. A certified medical device would include structured interfaces, safety alerts, and audit trails — all of which change performance.

Question 4 — Is the comparator relevant and fair?

What to look for: What is the AI being compared to? No intervention at all? Humans under the same conditions, or under their usual conditions?

Why it matters: Comparing any intervention to “nothing” almost guarantees a favorable result. Comparing a physician forced onto a text interface they’ve never used, stripped of their usual tools, against an AI optimized for text is not a fair comparison — it measures a handicap imposed on the human.

Question 5 — Does the scoring capture what matters clinically?

What to look for: What is being measured? Diagnostic accuracy? Perceived text quality? Symptom reduction? Long-term clinical outcomes? Is the coding binary or graded?

Why it matters: In clinical practice, not all errors are equal. Sending a patient to A&E instead of calling an ambulance for a heart attack is not the same as recommending ibuprofen instead of paracetamol for a headache. Binary scoring treats these situations as equivalent.

Question 6 — Are the emotional and motivational conditions realistic?

What to look for: Are participants in a state comparable to real clinical situations? Anxious, suffering, fatigued, pressed for time — or calm, detached, completing a cognitive microtask?

Why it matters: Stress, fear, pain, and urgency profoundly influence communication, memory, and decision-making. A participant paid £2.25 for an experimental task does not have the same cognitive engagement as someone thinking “this is my health, my life, my future.” This isn’t a methodological detail — it’s a qualitative difference of fundamental importance.

These six questions are not designed to disqualify studies. They’re designed to calibrate our reading. If a study answers “no” to most of them — and the majority do — it means the study informs us about AI’s technical capabilities in the lab, not about what would happen in our office.
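The six questions can be kept as a simple checklist. The sketch below is one possible encoding, not a validated instrument: the questions are rephrased so that "yes" always means "closer to real practice", and the names `QUESTIONS` and `calibrate_reading` as well as the "more than half" threshold are assumptions made for illustration.

```python
from typing import Sequence

# The six questions, rephrased so that True means "closer to real practice".
QUESTIONS = (
    "Are the participants actual patients?",
    "Are the symptoms lived rather than read from a script?",
    "Is the tool the deployed product rather than a lab prototype?",
    "Is the comparator relevant and fair?",
    "Does the scoring capture what matters clinically?",
    "Are the emotional and motivational conditions realistic?",
)


def calibrate_reading(answers: Sequence[bool]) -> str:
    """Map six yes/no answers to a reading posture (hypothetical threshold)."""
    if len(answers) != len(QUESTIONS):
        raise ValueError(f"expected {len(QUESTIONS)} answers, got {len(answers)}")
    no_count = sum(1 for answer in answers if not answer)
    # "Most of them" is read here as strictly more than half.
    if no_count > len(QUESTIONS) / 2:
        return "informs about technical capability in the lab"
    return "may inform clinical practice; read the details"


# A hypothetical vignette study with paid online participants
example = [False, False, False, True, False, False]
for question, answer in zip(QUESTIONS, example):
    print("yes" if answer else "no ", question)
print(calibrate_reading(example))
```

The output is a reading posture, not a quality score: a study with six "no" answers can still be rigorous about what it actually tests.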

V. What’s missing

What we need are studies that observe what actually happens when real patients interact with real AI tools in real healthcare situations.

Not platform workers role-playing as patients, but people living with suffering significant enough to have driven them to seek help. Not sanitized vignettes, but conversations with their hesitations, misunderstandings, and silences. Not binary scores, but fine-grained analysis of interaction processes. Not comparisons with “nothing,” but with the real alternatives.

The data already exists

Here’s the irony: the data we need is already out there. Thousands of people interact daily with psychological support chatbots: Woebot, Wysa, Replika. Millions use ChatGPT, Claude, Gemini, Le Chat, or other LLMs for health-related questions, even though these tools aren’t designed for that purpose.

These conversations aren’t simulations. They’re real interactions, with real stakes, real expectations, real disappointments or real satisfaction. They contain exactly the kind of information that no Prolific study could ever produce: How does someone phrase a request for help when they’re alone in front of their screen? How do they react when the chatbot’s response doesn’t match their expectations? What do they do with what they’ve received?

Conclusion: Demanding better

The problem we’ve documented isn’t that the existing research on AI in healthcare is poor. It’s that it gets read, cited, picked up, and disseminated without the critical distance it deserves. Spectacular headlines circulate through the media, fuel public debates, shape patient expectations and policy decisions. Meanwhile, the actual conditions under which these results were produced remain buried in the “Methods” sections that almost no one reads.

Ecological validity is not a technical detail. It’s what separates knowledge from clinical wisdom. It’s what allows us to distinguish “we’ve shown this works under certain conditions” from “this should work in your practice.”

The question isn’t “do LLMs work?” as though the answer were binary, universal, and final. The question is: under what specific conditions, for which types of patients, with what interaction design, toward what precise objectives, and compared to which real alternatives?

It’s precisely to equip this kind of rigorous reading that we’ve undertaken, across this series, a systematic review and critical analysis of the available methodological frameworks. Six tools, from six independent research teams, allow us to assess — each from a different angle — the soundness of AI health studies. For each framework, we’ve translated the technical criteria into concrete questions any clinician can ask when evaluating a publication. The box below brings together all of these analyses.

As practitioners, we have the right — and the duty — to demand that research claiming to illuminate our practice bears at least some resemblance to our practice. Between technophilic enthusiasm and technophobic rejection, there is a space for clinical rigor. That’s the space we’re trying to inhabit.

Series: AI Evaluation Frameworks in Healthcare

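This article draws on six methodological frameworks that we’ve analyzed in detail:

- Three-tier framework by Hua et al.

- Context-centered framework by Choudhury

- CHART (Chatbot Assessment Reporting Tool)

- CONSORT-AI

- CONSORT 2025 / SPIRIT 2025

- PROBAST+AI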

References

1. Ayers, J. W., Poliak, A., Dredze, M., et al. (2023). Comparing Physician and Artificial Intelligence Chatbot Responses to Patient Questions Posted to a Public Social Media Forum. JAMA Internal Medicine, 183(6), 589-596.

2. Blease, C., Heinz, M. V., Torous, J., Jacobson, N. C., et al. (2024). Safety and Efficacy of a Clinician-Supported Conversational AI Chatbot for Depression and Anxiety: Randomized Controlled Trial. NEJM AI, 1(6).

3. Bean, D. M., et al. (2024). Performance of Large Language Models on a Neurology Case Challenge. Nature, 634, 130-136.

4. Bean, N., Torous, J., & Reis, B. Y. (2025). Reliability of LLMs as medical assistants for the general public: a randomized, pre-registered study. Nature Medicine.

5. Li, J., et al. (2025). Diagnostic accuracy of artificial intelligence in clinical practice: systematic review and meta-analysis. npj Digital Medicine, 8(1), 24.

6. Wei, L., et al. (2025). Performance of Large Language Models on Clinical Vignettes Versus Multi-Turn Dialogues: Systematic Review and Meta-Analysis. Journal of Medical Internet Research, 27, e53748.

7. Chen, S., et al. (2025). Reporting Quality of Chatbot Studies in Health Care: Systematic Review. JAMA Network Open, 8(11), e2547823.

8. Torous, J., et al. (2025). Mental health chatbots: where is the evidence? A systematic review of effectiveness studies. World Psychiatry, 24(1), 45-58.

9. American Psychoanalytic Association Annual Meeting. (2025). Meta-analysis of effect sizes: AI chatbots versus psychotherapy for depression. Panel presentation.
